Scalable Data Solutions: 3 Steps to Future-Proof Data

Why Data Scalability is Critical for Business Survival

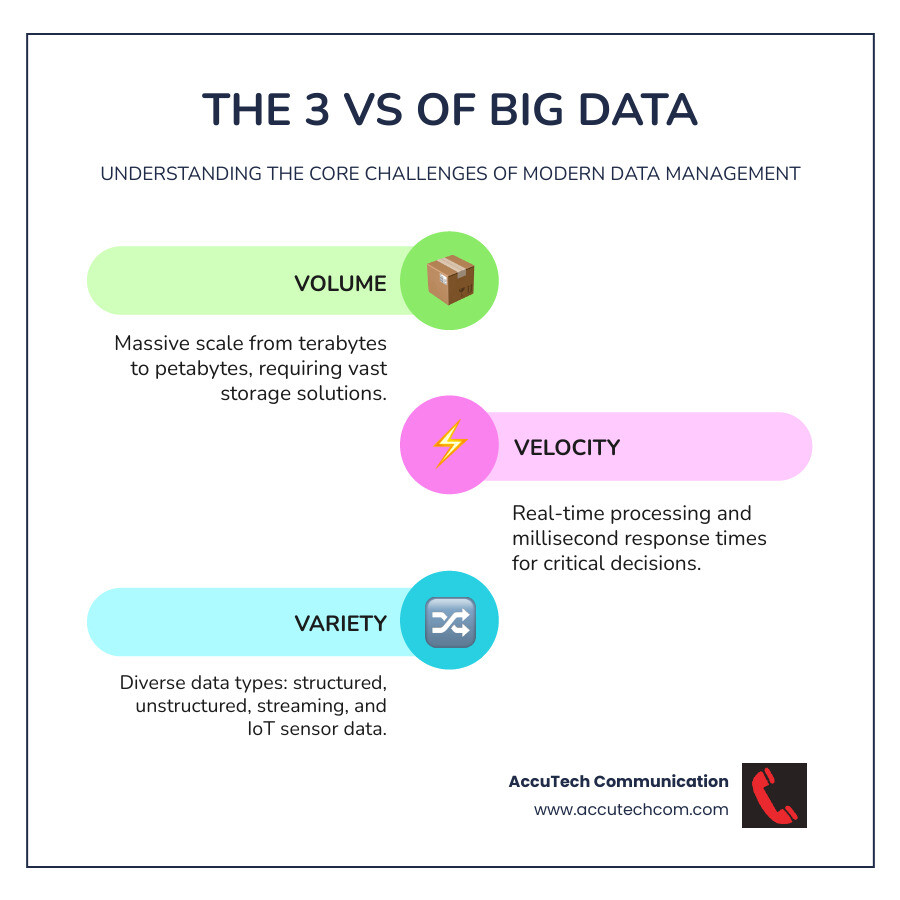

Scalable data solutions are systems designed to handle exponential growth in data volume, velocity, and variety without compromising performance. They adapt to increasing demands using distributed architectures, cloud technologies, and flexible storage.

Key Components of Scalable Data Solutions:

- Storage: Data lakes, cloud object storage, NoSQL databases

- Processing: Distributed frameworks (Hadoop, Spark), real-time analytics

- Architecture: Horizontal scaling, load balancing, automated resource allocation

- Infrastructure: High-speed networking, redundant systems, professional cabling

Organizations today face an unprecedented data explosion. While modern platforms enable companies like Pfizer to process data 4x faster, many businesses still rely on legacy systems that create bottlenecks and limit growth.

The challenge isn’t just storing more data; it’s processing it fast enough for real-time decisions in sectors like healthcare, finance, and manufacturing. Without scalable solutions, businesses risk hitting storage limits, suffering from reduced performance, and facing steep costs for emergency upgrades.

The solution is designing systems that grow with your business by expanding horizontally, leveraging cloud resources, and building on a rock-solid physical infrastructure.

I’m Corin Dolan, owner of AccuTech Communications. With over 30 years of experience building reliable network infrastructures, I’ve seen how professional planning and implementation can transform a company’s ability to handle exponential data growth with scalable data solutions.

Why Scalability is Non-Negotiable for Modern Businesses

Many businesses experience a sudden slowdown in their systems as data accumulates, turning minute-long reports into hour-long ordeals. This happens when they haven’t prepared for explosive data growth. If your data systems aren’t ready for this expansion, you’re already falling behind.

The famous “3 Vs” of Big Data explain the challenge: Volume (massive amounts of information, from terabytes to petabytes), Velocity (real-time data streams from websites and sensors), and Variety (diverse data types, from structured spreadsheets to unstructured social media posts and videos).

For small and medium businesses, these challenges are amplified by limited resources. When systems hit performance bottlenecks, it affects everything from customer service to inventory. The high operational costs of constantly upgrading outdated systems can quickly drain a budget.

The Challenge of Exponential Data Growth

The data deluge isn’t slowing down. Traditional data management and legacy systems were built for predictable data volumes, not the tsunami we face today. As systems approach their limits, databases slow down, reports time out, and your team spends more time waiting than working. This reduced performance leads to slower decisions, frustrated employees, and missed opportunities.

Worst of all, emergency upgrades are expensive and disruptive, hitting when you can least afford them. Proper planning avoids these premium-priced urgent fixes.

The Strategic Value of Scalability

Scalability provides a solid foundation for growth. When your data infrastructure can expand smoothly, your business benefits in several key ways:

- Business agility: Pivot quickly when markets change, launch new products, or expand into new territories without being held back by your data systems.

- Cost-effectiveness: Invest in systems that grow efficiently, paying for what you need when you need it, rather than funding massive, reactive overhauls.

- Data-driven decision making: Empower your team with fast, reliable insights instead of having them make decisions based on outdated information.

- Future-proofing: Build an infrastructure that is ready for future technologies like AI and machine learning.

- Enabling innovation: Free your team from fighting with technical workarounds so they can focus on developing new services and improving customer experiences.

As experts at Reforge note in The Data Scaling Framework: 3 Steps to Scalable Organizations, building scalable data solutions is a strategic imperative that supports your entire business vision. Without it, even the best ideas can collapse under their own success.

Core Components of a Scalable Data Architecture

Building scalable data solutions requires a thoughtful architecture with four pillars: storage, processing, analytics, and security. Each component must be designed to scale, as a bottleneck in one area can cripple the entire system. For insights on building this foundation, see our guide on Data Center Infrastructure Design.

Storage: The Foundation for Your Data

Modern storage offers incredible flexibility. Key options include:

- Data lakes: Store vast amounts of information in its raw format, allowing for flexible analysis later.

- Cloud object storage: Provides a highly scalable and cost-effective backbone for most data lakes.

- NoSQL databases: Offer flexibility for varied data types and are designed to scale across multiple servers.

- Distributed storage systems: Spread data across clusters of computers for improved resilience and scalability.

- Cloud data warehousing: Optimized for fast analysis of structured data and can scale instantly.

The choice between cloud and on-premises storage is critical. Cloud offers pay-as-you-grow pricing and near-limitless scalability, while on-premises provides full control but requires significant upfront investment. A hybrid approach is often the best solution.

| Feature | Cloud Storage | On-Premises Storage |
|---|---|---|
| Cost | Pay-as-you-go, lower upfront, operational costs | High upfront capital expenditure, maintenance costs |
| Scalability | Near-limitless, on-demand, elastic | Limited by physical hardware, requires manual upgrades |
| Control | Managed by provider, less direct control | Full control over hardware and software |
| Maintenance | Handled by provider | Requires in-house IT team and resources |
| Flexibility | Access from anywhere, various service models | Restricted to local network, custom configurations |

Processing and Integration: Turning Raw Data into Insights

Raw data must be processed to become useful. Distributed processing frameworks like Hadoop and Spark are essential for handling massive datasets by spreading work across many machines. For immediate insights, such as fraud detection, real-time stream processing technologies like Apache Kafka are necessary.
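To make "spreading work across many machines" concrete, here is a minimal sketch of the split, process, and merge pattern that frameworks like Hadoop and Spark apply at cluster scale. It is an illustration only: the function names are hypothetical, and a local thread pool stands in for the separate machines a real cluster would use.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def count_words(chunk):
    """Map step: count words within one partition of the data."""
    return Counter(word.lower() for line in chunk for word in line.split())


def split_into_chunks(lines, n_chunks):
    """Partition the input so each worker gets a roughly equal share."""
    return [lines[i::n_chunks] for i in range(n_chunks)]


def distributed_word_count(lines, n_workers=4):
    """Run the map step in parallel, then reduce the partial results."""
    chunks = split_into_chunks(lines, n_workers)
    # Threads stand in for cluster nodes here (an assumption of this sketch).
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(count_words, chunks))
    total = Counter()
    for partial in partials:
        total.update(partial)  # Reduce step: merge per-partition counts
    return total
```

Calling `distributed_word_count(log_lines)` returns one combined `Counter`. The point of the pattern is that each partition is processed independently, so adding workers adds capacity.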

Big data integration combines information from diverse sources. Modern platforms unify data ingestion, storage, transformation, and visualization, reducing complexity and costs, as noted by experts on data integration tools. ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) pipelines are the workhorses that move and prepare data for analysis.
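The difference between ETL and ELT is easiest to see side by side. The sketch below uses Python's built-in `sqlite3` module as a stand-in for a real warehouse. The sample records, table names, and function names are all hypothetical. In the ETL path the data is cleaned in application code before loading. In the ELT path the raw data is loaded first and cleaned with SQL inside the database.

```python
import sqlite3

# Hypothetical source records with messy formatting.
RAW_ORDERS = [
    {"id": 1, "amount": " 19.99 ", "region": "ma"},
    {"id": 2, "amount": "5.00", "region": "NH"},
]


def etl(conn, rows):
    """ETL: transform in application code, then load the cleaned rows."""
    cleaned = [
        (r["id"], float(r["amount"].strip()), r["region"].upper()) for r in rows
    ]
    conn.execute("CREATE TABLE orders_etl (id INTEGER, amount REAL, region TEXT)")
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?, ?)", cleaned)


def elt(conn, rows):
    """ELT: load the raw rows first, then transform with SQL in the database."""
    conn.execute("CREATE TABLE orders_raw (id INTEGER, amount TEXT, region TEXT)")
    conn.executemany("INSERT INTO orders_raw VALUES (:id, :amount, :region)", rows)
    conn.execute(
        "CREATE TABLE orders_elt AS "
        "SELECT id, CAST(TRIM(amount) AS REAL) AS amount, UPPER(region) AS region "
        "FROM orders_raw"
    )
```

Both paths end with the same cleaned table. ELT also keeps the untouched raw copy, which is one reason it pairs well with scalable cloud warehouses.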

Analytics and Intelligence: Empowering Decisions

This is where data becomes actionable. Advanced analytics, including Machine Learning (ML) and Artificial Intelligence (AI), uncover patterns and predict future trends, enabling companies like Pfizer to process data 4x faster. Decision intelligence applies these analytics to improve business choices, while self-service dashboards democratize data access, allowing users to get answers without waiting for IT.

Security and Governance: Building a Framework of Trust

Data security and governance are non-negotiable. Data protection and compliance with regulations like GDPR and CCPA are critical, requiring tools like encryption and anonymization. Access control ensures only authorized personnel can view specific data.
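As a rough illustration of two of these controls, the sketch below pseudonymizes an identifier with a keyed hash and filters a record down to the fields a role may see. The key, role names, and field names are hypothetical. Note that pseudonymized data generally still counts as personal data under GDPR, so this is a starting point rather than full anonymization.

```python
import hashlib
import hmac

# Hypothetical key; in practice, load it from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"

# Hypothetical roles and the fields each one is allowed to read.
ROLE_PERMISSIONS = {
    "analyst": {"customer_token", "region", "order_total"},
    "support": {"customer_token", "email", "region"},
}


def pseudonymize(value: str) -> str:
    """Replace an identifier with a keyed hash: stable for joins, not readable."""
    return hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()


def view_for_role(record: dict, role: str) -> dict:
    """Return only the fields this role may see; unknown roles see nothing."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}
```

An analyst calling `view_for_role(record, "analyst")` would receive the token, region, and order total, but never the raw email address.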

Data governance policies define responsibilities and maintain data quality. Finally, physical security for your data center infrastructure, including professional cabling, is essential for protecting data at its source. This highlights The Importance of Professional Cabling Installation for Your Business in your overall security strategy.

Designing and Implementing Scalable Data Solutions

Building scalable data solutions requires a systematic approach, starting with a deep understanding of business needs before selecting technology. Over my 30 years in this field, I’ve seen that success comes from careful planning, not rushing into flashy tech.

Best Practices for Designing Scalable Data Solutions

A successful design begins with a thorough needs assessment. Start with your business goals to define what problems you need to solve. Key considerations include:

- Technology Stack: The cloud vs. on-premises decision depends on your budget, control requirements, and growth plans. Similarly, choosing between open-source and proprietary software depends on your team’s expertise and support needs.

- Data Modeling: A logical organization of your data is crucial. This involves creating conceptual, logical, and physical models to prevent chaos as data grows.

- User Needs: The solution must be usable. Understand your team’s daily challenges and technical comfort levels to ensure the system is effective.

Before designing, always clarify current data sources and volumes, future growth projections, real-time processing needs, user roles, and reporting requirements.
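Growth projections do not need to be elaborate to be useful. A simple compound-growth estimate, sketched below, is often enough for a first pass. The function names and all figures are hypothetical, and a steady annual growth rate is an assumption that real data rarely follows exactly.

```python
import math


def projected_volume_tb(current_tb, annual_growth_rate, years):
    """Compound growth: volume = current * (1 + rate) ** years."""
    return current_tb * (1 + annual_growth_rate) ** years


def years_until_full(current_tb, capacity_tb, annual_growth_rate):
    """Years until data outgrows capacity, assuming steady compound growth."""
    if annual_growth_rate <= 0:
        raise ValueError("annual_growth_rate must be positive")
    if current_tb >= capacity_tb:
        return 0.0
    return math.log(capacity_tb / current_tb) / math.log(1 + annual_growth_rate)
```

For example, 10 TB growing 40% per year reaches roughly 27.4 TB after three years, and it would outgrow a 40 TB system in a little over four years.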

Optimization Strategies for Peak Performance

Ongoing optimization is key to maintaining performance as data grows. Essential strategies include:

- Data Indexing: Creates shortcuts to speed up data retrieval, turning minute-long queries into seconds-long ones.

- Data Compression: Saves storage space and improves performance by reducing the amount of data moving across your network. Compressed columnar formats like Parquet can cut storage needs by 80% or more, depending on the data.

- Load Balancing: Distributes workloads across multiple servers to prevent any single one from becoming a bottleneck.

- Scaling Strategy: A scale-out approach (adding more servers) is generally preferred over scale-up (adding resources to a single server) for its flexibility and fault tolerance.

- Performance Monitoring: Continuously track CPU, memory, and network latency to identify and resolve issues before they impact users.
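To show what indexing changes under the hood, the sketch below uses Python's built-in `sqlite3` module and its `EXPLAIN QUERY PLAN` statement. The table, column, and index names are hypothetical, and a small in-memory database stands in for a production system.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE events (id INTEGER PRIMARY KEY, customer_id INTEGER, payload TEXT)"
)
# Hypothetical sample data: 10,000 events spread across 1,000 customers.
conn.executemany(
    "INSERT INTO events (customer_id, payload) VALUES (?, ?)",
    [(i % 1000, "x") for i in range(10000)],
)


def query_plan(connection, sql):
    """Return SQLite's description of how it intends to run the query."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " ".join(row[3] for row in rows)  # column 3 holds the plan detail


QUERY = "SELECT COUNT(*) FROM events WHERE customer_id = 42"

plan_before = query_plan(conn, QUERY)  # no index yet: full table scan
conn.execute("CREATE INDEX idx_events_customer ON events (customer_id)")
plan_after = query_plan(conn, QUERY)  # planner can now search the index
```

Printing `plan_before` and `plan_after` should show a table scan in the first case and a search using `idx_events_customer` in the second. The exact wording varies by SQLite version.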

The Bedrock: Reliable Physical Infrastructure

Software and cloud platforms rely on a solid physical foundation. An inadequate network or data center can cripple even the best architecture.

- Data Center Build-Outs: Require expertise in power, cooling, and cable management to support current needs and future expansion.

- High-Speed Connectivity: Inadequate cabling creates bottlenecks. Our Network Cabling Installation ensures your network can handle modern data flows. For the most demanding applications, Fiber Optic Installation provides superior speed and bandwidth.

- Latency Reduction: Optimized network paths and high-quality cabling are critical for real-time applications where milliseconds matter.

- Data Center Cooling: Prevents server overheating, which causes failures and slowdowns. Effective cooling protects your investment and ensures consistent performance.

Cutting corners on physical infrastructure always costs more in the long run.

The Future of Data: Emerging Trends and Technologies

The world of scalable data solutions is constantly evolving. Understanding emerging trends helps you prepare for what’s next.

The Internet of Things (IoT) is a major driver of data growth. Millions of smart devices and industrial sensors generate continuous data streams that require scalable infrastructure to collect, store, and analyze in real time. This is essential for applications like smart cities and Industry 4.0 manufacturing.

Multi-cloud and hybrid-cloud strategies are now the norm. Businesses spread workloads across different cloud providers and on-premises systems to gain flexibility, avoid vendor lock-in, and use the best tools for each job. This approach allows companies to maintain control over sensitive data while leveraging the scalability of the cloud.

Decentralized data networks, built on technologies like Ceramic, distribute data so that no single entity controls it. This emerging trend promises to improve data provenance and integrity, reducing reliance on centralized platforms.

Automation and AI-driven operations are rapidly expanding in data management. Systems can now automatically provision resources, optimize queries, and predict hardware failures. This frees up IT teams to focus on strategic initiatives instead of routine maintenance.

The pace of change demands continuous learning and adaptation. Organizations that invest in ongoing education and build teams that can adapt to new technologies will have a significant advantage. As noted in the Blueprint for Scalability: Tackling Exponential Data Growth, scalability is a strategic imperative for navigating the data explosion.

The future belongs to businesses that can turn data into insights. With the right scalable foundation, including robust physical infrastructure, your organization can be ready for any technological advance.

Frequently Asked Questions about Data Scalability

When exploring scalable data solutions, businesses often have similar questions. Here are answers to the most common ones I’ve heard over 30 years at AccuTech Communications.

What is the difference between scaling up and scaling out?

This is a choice between making one system more powerful versus adding more systems to a network.

- Scaling up (vertical scaling) involves adding more resources (CPU, RAM, storage) to a single server. It’s simple to manage but has a hard physical limit. If that single server fails, your entire operation stops.

- Scaling out (horizontal scaling) involves distributing the workload across multiple servers. This is the foundation of modern scalable data solutions. It offers virtually limitless growth potential and fault tolerance—if one server fails, others pick up the slack without disruption.

For most big data applications, scaling out is the superior long-term strategy.
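One common way to scale out is to partition (shard) data by key so that each server holds only a slice. The sketch below is a simplified illustration with hypothetical names, using in-memory dictionaries in place of real servers.

```python
import hashlib


def shard_for(key: str, n_shards: int) -> int:
    """Map a key to a shard number using a stable hash."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % n_shards


class ShardedStore:
    """Toy key-value store; each dict stands in for one server."""

    def __init__(self, n_shards):
        self.shards = [dict() for _ in range(n_shards)]

    def put(self, key, value):
        self.shards[shard_for(key, len(self.shards))][key] = value

    def get(self, key):
        return self.shards[shard_for(key, len(self.shards))].get(key)
```

With simple modulo hashing like this, changing the number of shards moves most keys to a different shard. That is why production systems often use consistent hashing or a managed partitioning scheme instead.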

How do I choose between a data lake and a data warehouse?

These serve different purposes, and many businesses use both.

- A data lake is a vast storage repository for raw data of all types (structured and unstructured). It uses a “schema-on-read” approach, meaning you structure the data when you need it for analysis. This flexibility is ideal for data science and machine learning exploration.

- A data warehouse stores cleaned, structured, and processed data. It uses a “schema-on-write” approach, where the data format is defined upfront. This makes it perfect for business intelligence (BI), generating consistent and reliable reports for decision-makers.

Many organizations use a data lake for raw data exploration and a data warehouse for polished, business-ready reporting.
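The schema-on-read versus schema-on-write distinction can be sketched in a few lines. Everything here is hypothetical (the sample records, the schema, and the function names), and plain Python lists stand in for the lake and the warehouse.

```python
import json

# Hypothetical raw records in a data lake: anything is accepted as-is.
RAW_LAKE = [
    '{"user": "a1", "amount": "12.50", "note": "gift"}',
    '{"user": "b2", "amount": "oops"}',
]

# Hypothetical warehouse schema: field names and required types.
WAREHOUSE_SCHEMA = {"user": str, "amount": float}


def write_to_warehouse(table, record):
    """Schema-on-write: validate and coerce before storing; bad rows are rejected."""
    row = {}
    for field, field_type in WAREHOUSE_SCHEMA.items():
        row[field] = field_type(record[field])  # raises if missing or invalid
    table.append(row)


def read_from_lake(raw_lines, fields):
    """Schema-on-read: store everything; apply structure only at query time."""
    for line in raw_lines:
        record = json.loads(line)
        yield {field: record.get(field) for field in fields}
```

The lake happily returns both records, including the one with a bad amount, while the warehouse rejects that record at write time. That trade-off between flexibility and consistency is why many teams run both.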

What are the first steps for an SME to build a scalable data solution?

For a small or medium-sized business, the task can feel daunting, but you can start effectively with a focused approach.

- Conduct a Needs Assessment: Start with specific business problems. What decisions could be improved with better data? Focus on a high-impact area first.

- Start Small, Think Big: Begin with a pilot project for a single use case. This allows you to prove value and learn on a smaller scale before making larger investments.

- Leverage Cloud Services: Cloud platforms offer enterprise-grade scalability without a massive upfront cost. You can start small and pay as you grow.

- Prioritize Data Quality: Garbage in, garbage out. Implement processes to ensure your data is clean and reliable from the start.

- Plan for Governance: Establish rules for data ownership, access, and security early on. This foundation is invaluable as you grow.
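A data quality process can start very small. The sketch below counts incomplete rows and duplicate keys in a batch of records. The function and field names are hypothetical, and real pipelines would usually add type and range checks on top.

```python
def quality_report(rows, required_fields, key_field):
    """Count rows with missing required values and rows that repeat a key."""
    seen_keys = set()
    duplicates = 0
    incomplete = 0
    for row in rows:
        # A required field counts as missing if it is absent, None, or empty.
        if any(row.get(field) in (None, "") for field in required_fields):
            incomplete += 1
        key = row.get(key_field)
        if key in seen_keys:
            duplicates += 1
        seen_keys.add(key)
    return {"rows": len(rows), "incomplete": incomplete, "duplicates": duplicates}
```

Running a report like this on every incoming batch, and tracking the numbers over time, gives an early warning before bad data reaches dashboards.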

The key is starting with a solid foundation in both your data strategy and your physical infrastructure. Professional planning and implementation are critical for building scalable data solutions that truly grow with your business.

Conclusion

Building scalable data solutions is about positioning your business for long-term success. The explosive growth in data Volume, Velocity, and Variety can either be an obstacle or a competitive advantage. The choice depends on adopting a holistic approach to your data infrastructure.

This journey involves choosing the right storage solutions and processing frameworks, but it doesn’t stop there. Your analytics capabilities, security measures, and governance policies must work together to create a system that is scalable, trustworthy, and compliant.

Often overlooked is the critical importance of physical infrastructure. The most advanced cloud architecture will fail if the underlying network cabling or data center environment can’t handle the load. At AccuTech Communications, we have been building these essential physical foundations for businesses in Massachusetts, New Hampshire, and Rhode Island since 1993. We provide certified, reliable service for data center build-outs and structured cabling systems that are ready for the future.

As technology evolves with IoT, multi-cloud strategies, and AI-driven automation, a robust foundation becomes even more critical. By partnering with experts who understand both the digital and physical aspects of data management, you are future-proofing your business.

Scalability is a journey of continuous adaptation. Ready to build a robust physical foundation for your data? Explore our professional Data Center Build Out Services and learn how we can help turn your data challenges into competitive advantages.