Why Data Center Connectivity Matters for Your Business

Data center connectivity refers to the networking infrastructure and solutions that enable communication between data centers, cloud platforms, and end users. At its core, it’s the foundation that keeps your business running smoothly in today’s digital world.

Quick Answer: What is Data Center Connectivity?

- Definition: Private network connections between data centers using fiber optics, cross-connects, or dedicated circuits

- Purpose: Enables high-speed, low-latency data transfer between facilities and cloud services

- Key Benefits: Better performance, enhanced security, business continuity, and scalability

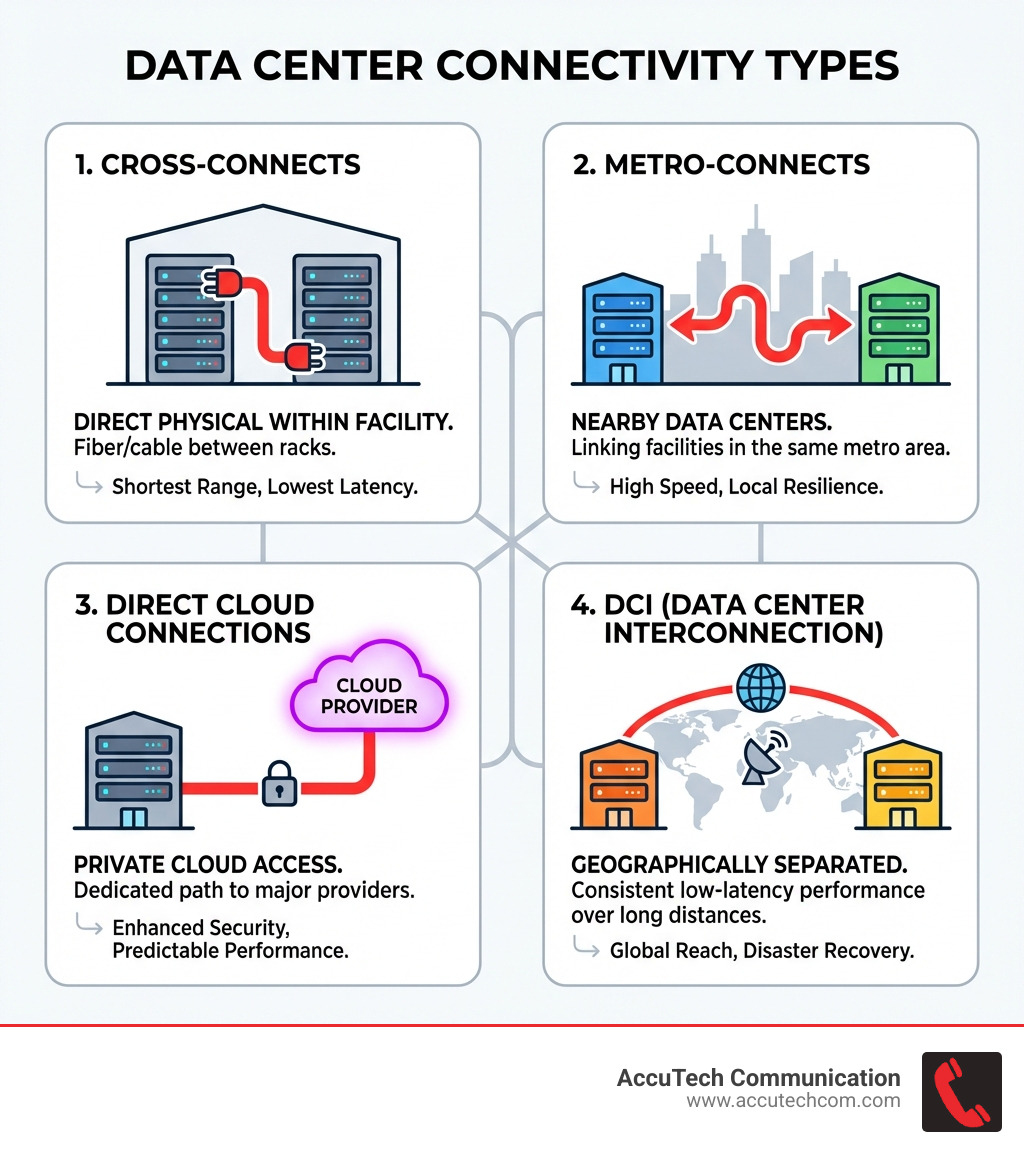

- Common Types: Cross-connects, metro-connects, direct cloud connections, and data center interconnection (DCI)

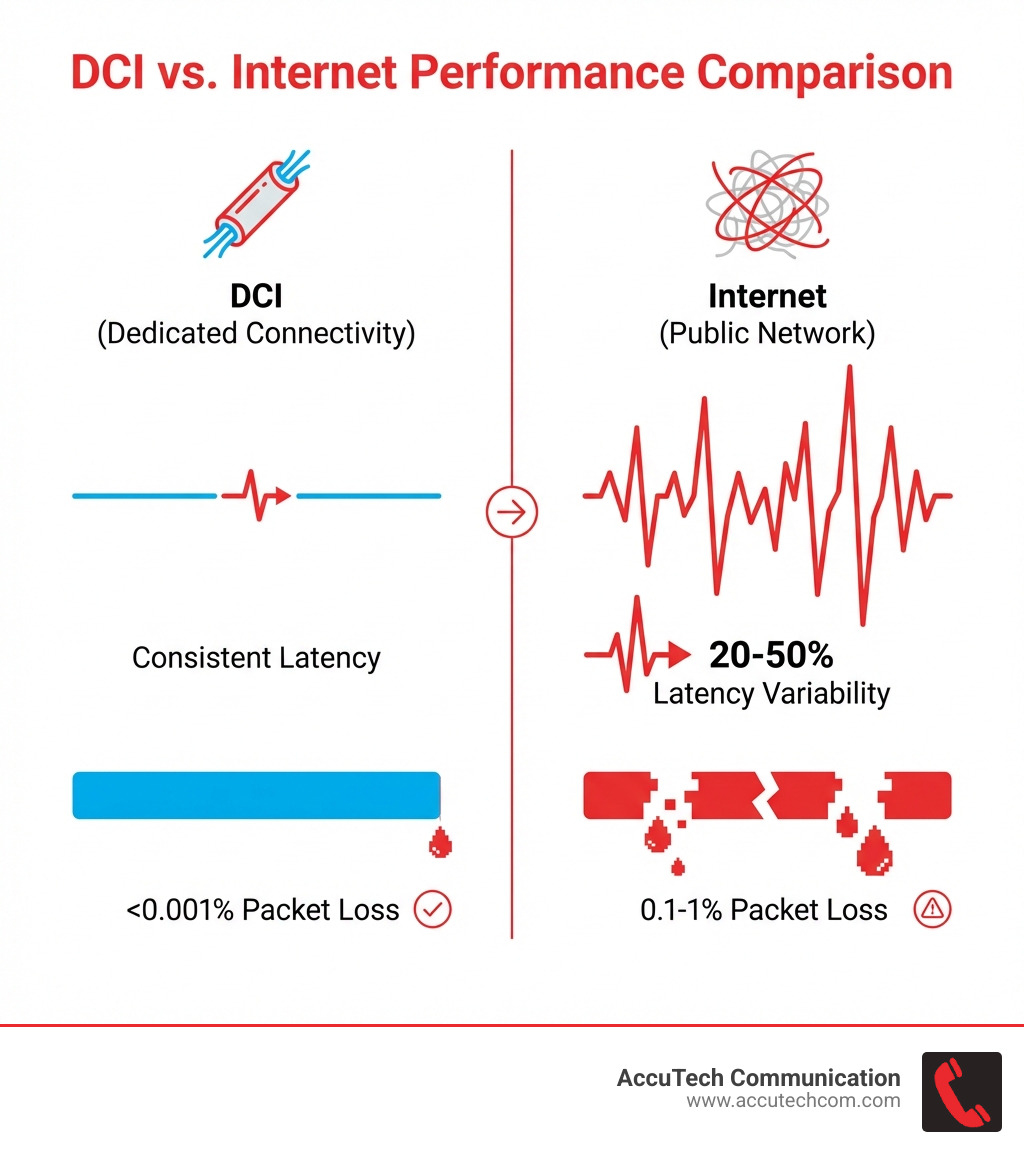

- Performance: DCI delivers packet loss under 0.001% vs 0.1-1% on standard internet connections

When users see loading icons for more than a few seconds, they abandon apps and websites. That’s why data center connectivity has become mission-critical infrastructure.

The difference between standard internet and dedicated data center connectivity is substantial. Internet connections experience latency swings of 20-50%, while dedicated DCI maintains consistent performance. For applications requiring real-time data synchronization, this consistency makes or breaks operations.

I’m Corin Dolan, owner of AccuTech Communications, and I’ve been designing and implementing data center connectivity solutions for commercial clients across Massachusetts, New Hampshire, and Rhode Island since 1993. Throughout my career, I’ve seen how proper connectivity infrastructure transforms business operations by eliminating downtime and enabling seamless disaster recovery strategies.


Fundamental Types of Data Center Connectivity

In our experience serving businesses in Metro-west Boston and Worcester, MA, we’ve found that most enterprises require a hybrid approach to connectivity. Understanding the physical and virtual “plumbing” of your data center is the first step toward building a resilient network.

Cross-Connects: The Direct Physical Link

A cross-connect is a direct physical connection between two different environments within the same data center. Typically using fiber optic cable, these offer the lowest possible latency and the highest reliability because the data never leaves the building. It’s a point-to-point connection that provides guaranteed bandwidth, making it ideal for high-frequency trading or private data exchange between partners.

Metro-Connects and Campus Connectivity

When your infrastructure is spread across multiple buildings in a city like Waltham or Woburn, MA, metro-connects bridge the gap. These high-speed links connect data centers within the same metropolitan area. They allow you to treat separate facilities as a single, unified campus.

Direct Cloud Connections

To avoid the unpredictable “weather” of the public internet, businesses use direct cloud connections (like Azure ExpressRoute or AWS Direct Connect). These provide a private on-ramp to major cloud providers, so traffic between your data center and the cloud stays secure and performs consistently.

Data Center Interconnection (DCI) vs. Standard Internet

Many businesses ask us why they should invest in DCI when they already have a high-speed business internet plan. The answer lies in the “Three Pillars”: Performance, Security, and Cost.

Performance and Packet Loss

Standard internet connections are subject to congestion and “best-effort” routing. This leads to packet loss, which typically runs between 0.1% and 1% under normal conditions. In contrast, data center connectivity solutions like DCI circuits commonly deliver packet loss under 0.001%. This is vital for building a data center environment that supports high-speed data transmission.
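To see why a small difference in packet loss matters, here is a rough sketch using the well-known Mathis approximation for single-flow TCP throughput. The 20 ms round-trip time and 1460-byte segment size are assumptions chosen for illustration, and modern congestion control algorithms can behave differently, so treat the numbers as directional only.

```python
import math

def mathis_throughput_mbps(mss_bytes: int, rtt_ms: float, loss_rate: float) -> float:
    """Approximate ceiling on single-flow TCP throughput (Mathis et al. model).

    throughput <= (MSS / RTT) * (C / sqrt(loss)), with C roughly 1.22.
    """
    if loss_rate <= 0 or rtt_ms <= 0:
        raise ValueError("loss_rate and rtt_ms must be positive")
    c = 1.22  # model constant, approximately sqrt(3/2)
    bytes_per_second = (mss_bytes / (rtt_ms / 1000.0)) * (c / math.sqrt(loss_rate))
    return bytes_per_second * 8 / 1_000_000

# Assumed inputs: 1460-byte segments and a 20 ms round trip on both links.
internet_mbps = mathis_throughput_mbps(1460, 20.0, 0.001)    # 0.1% loss
dci_mbps = mathis_throughput_mbps(1460, 20.0, 0.00001)       # 0.001% loss

print(f"Internet-like link: {internet_mbps:.1f} Mbps per flow")
print(f"DCI-like link:      {dci_mbps:.1f} Mbps per flow")
```

Under these assumptions, the per-flow ceiling rises from about 22.5 Mbps to about 225 Mbps, because throughput in this model scales with the inverse square root of the loss rate.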

Enhanced Security Through Isolation

Because DCI uses private, dedicated paths, your data is never exposed to the public internet. This reduces the attack surface for your organization. For businesses in regulated industries in Rhode Island and Massachusetts, this physical isolation is a key component of compliance.

The Cost Crossover Point

While DCI has a higher upfront cost, there is a crossover point, usually around 10 Gbps, where dedicated data center connectivity becomes more cost-effective per megabit than scaling traditional internet bandwidth. The exact point depends on local carrier pricing and contract terms.
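The crossover idea can be sketched with a simple linear cost model. Every price below is a hypothetical placeholder, picked only so the result lands near the 10 Gbps ballpark mentioned above; real quotes will differ, so substitute your own figures.

```python
from typing import Optional

def crossover_mbps(internet_per_mbps: float,
                   dci_fixed_monthly: float,
                   dci_per_mbps: float) -> Optional[float]:
    """Bandwidth at which DCI and internet monthly costs are equal.

    Model (an assumption): internet cost = mbps * internet_per_mbps,
    DCI cost = dci_fixed_monthly + mbps * dci_per_mbps.
    Returns None when DCI never becomes cheaper under this model.
    """
    if internet_per_mbps <= dci_per_mbps:
        return None
    return dci_fixed_monthly / (internet_per_mbps - dci_per_mbps)

# Hypothetical prices in dollars per month, for illustration only.
breakeven = crossover_mbps(internet_per_mbps=0.50,
                           dci_fixed_monthly=4000.0,
                           dci_per_mbps=0.10)
print(f"Break-even bandwidth: {breakeven:.0f} Mbps")
```

Above the break-even bandwidth, the dedicated circuit costs less per month in this model; below it, internet bandwidth remains cheaper.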

Layer 2 and Layer 3 Data Center Connectivity Options

Choosing between Layer 2 and Layer 3 of the OSI model is one of the most important technical decisions in data center infrastructure design.

Layer 2 DCI: Seamless Extensions

Layer 2 connections act like a long “virtual patch cord.” They allow you to extend your local area network (LAN) across geographically separated sites.

- Best Use Case: Live Virtual Machine (VM) migration and applications that require devices to stay on the same subnet.

- Risk: It extends the broadcast domain, meaning a network loop in one site can crash the network in another.

Layer 3 DCI: Scalable Routing

Layer 3 uses routing protocols to connect sites. This provides better isolation and scalability.

- Best Use Case: Multi-site architectures where you need to load balance traffic and isolate failure domains.

- Advantage: If one site has a network issue, it’s much less likely to affect your other locations.
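The practical difference shows up when a workload moves between sites. The sketch below uses Python’s standard `ipaddress` module to check whether a virtual machine can keep its IP address after a move. The addresses and subnets are made-up examples, not a recommended addressing plan.

```python
import ipaddress

def can_keep_address(vm_ip: str, destination_subnet: str) -> bool:
    """Return True if the VM's current IP is valid in the destination site's subnet."""
    return ipaddress.ip_address(vm_ip) in ipaddress.ip_network(destination_subnet)

vm_ip = "10.10.0.25"  # hypothetical VM address at site A

# Layer 2 DCI: the same subnet is stretched to site B.
stretched_l2_subnet_site_b = "10.10.0.0/24"
# Layer 3 DCI: site B has its own routed subnet.
routed_l3_subnet_site_b = "10.20.0.0/24"

l2_keeps_ip = can_keep_address(vm_ip, stretched_l2_subnet_site_b)
l3_keeps_ip = can_keep_address(vm_ip, routed_l3_subnet_site_b)

print(f"Layer 2 move keeps IP: {l2_keeps_ip}")
print(f"Layer 3 move keeps IP: {l3_keeps_ip}")
```

With the stretched subnet, the VM keeps its address, which is why live migration favors Layer 2. With routed subnets, the VM needs a new address or some form of overlay, but each site’s broadcast domain stays separate.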

Dark Fiber vs. Lit Services: Choosing Your Foundation

When we perform a data center build out in Watertown, MA, we always discuss the choice between dark and “lit” fiber.

Dark Fiber: Ultimate Control

Dark fiber is unlit, unmanaged fiber optic cable. You buy or lease the “glass” and provide your own equipment to “light” it.

- Pros: A very high capacity ceiling; in many cases you can upgrade from 10G to 400G by replacing the transceivers and equipment at each end rather than the fiber itself.

- Cons: Requires significant expertise to manage and higher initial CAPEX for equipment.

Lit Services: Managed Simplicity

Lit services (like Wavelength or Ethernet services) are managed by a provider. They provide a specific amount of bandwidth (e.g., 10 Gbps) and handle the hardware.

- Pros: Lower upfront costs and faster deployment.

- Cons: You are limited by the service level agreement (SLA) and the specific bandwidth you purchased.

Latency Requirements and Geographic Diversity

Latency is the “speed limit” of your network. For businesses in Marlborough or Sudbury, MA, the physical distance between data centers dictates what applications can run.

- Synchronous Data Replication: This provides zero data loss because every write must be committed at both sites before it is acknowledged. Most synchronous systems tolerate a maximum of 5-10 milliseconds round-trip latency. This usually limits the distance between facilities to about 50-100 miles.

- Asynchronous Replication: This allows for a slight delay between sites, making it suitable for facilities separated by hundreds or thousands of miles.

- Disaster Recovery Rule of Thumb: A common guideline is to keep at least 100 miles between facilities so that a single regional disaster (like a major storm or power grid failure) doesn’t take out both your primary and backup sites.
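To get a feel for how distance turns into latency, the sketch below estimates round-trip propagation delay over fiber. It assumes a refractive index of about 1.468 for single-mode fiber and ignores equipment delay, storage acknowledgement time, and the fact that real fiber routes are longer than straight-line distance.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in a vacuum
FIBER_REFRACTIVE_INDEX = 1.468      # assumed typical value for single-mode fiber
KM_PER_MILE = 1.609344

def fiber_rtt_ms(route_miles: float) -> float:
    """Round-trip propagation delay in milliseconds for a given fiber route length."""
    route_km = route_miles * KM_PER_MILE
    speed_in_fiber_km_s = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX
    one_way_seconds = route_km / speed_in_fiber_km_s
    return 2 * one_way_seconds * 1000

for miles in (50, 100, 500):
    print(f"{miles:>4} route miles: {fiber_rtt_ms(miles):.2f} ms round trip")
```

Propagation alone comes to about 1.6 ms round trip at 100 route miles. The rest of a 5-10 ms budget is consumed by switching equipment, indirect fiber paths, and the extra round trips a storage write can require, which is one reason practical distance limits are more conservative than the raw physics suggests.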

The Role of SDN and NFV in Modern Connectivity

Modern data center connectivity is increasingly software-defined. This shift allows for “Connectivity as a Service,” where bandwidth can be provisioned in minutes rather than months.

Software-Defined Networking (SDN)

SDN decouples the network’s control plane from the data plane. This allows us to programmatically route traffic, optimize paths based on real-time congestion, and even route data based on “green” metrics like carbon intensity.
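As a simplified illustration of programmatic routing, the sketch below shows a controller-style function that picks the least-cost path and changes its answer when a link’s cost rises. The topology, node names, and cost values are hypothetical; a real SDN controller would use its own APIs and live telemetry rather than a hard-coded dictionary.

```python
import heapq

def least_cost_path(links, source, target):
    """Dijkstra's algorithm over a dict of {node: {neighbor: cost}}.

    Returns (total_cost, path) or None if the target is unreachable.
    """
    queue = [(0.0, source, [source])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == target:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, weight in links.get(node, {}).items():
            if neighbor not in visited:
                heapq.heappush(queue, (cost + weight, neighbor, path + [neighbor]))
    return None

# Hypothetical four-site topology; costs could represent latency or congestion.
links = {
    "A": {"B": 2.0, "C": 3.0},
    "B": {"D": 2.0},
    "C": {"D": 3.0},
}
normal_route = least_cost_path(links, "A", "D")

# Simulate congestion on the B-to-D link and recompute the route.
links["B"]["D"] = 10.0
congested_route = least_cost_path(links, "A", "D")

print("Normal:   ", normal_route)
print("Congested:", congested_route)
```

The same function could weight links by any metric, including a carbon-intensity score, as long as the costs are non-negative.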

Network Functions Virtualization (NFV)

NFV replaces traditional hardware (like firewalls and load balancers) with virtualized software versions. This also affects data center cable installation, because it reduces the physical footprint and power requirements of your network racks.

Optimizing Connectivity for Hybrid and Multi-Cloud

Most of our clients aren’t just in one cloud; they use a mix of on-premise servers, colocation, and multiple cloud providers.

- Cloud-Neutral Interconnection: Don’t get locked into a single provider’s ecosystem. Use a carrier-neutral data center to create a “network fabric” that connects all your clouds.

- Redundant Paths: Ensure you have physical diversity. Two fiber lines in the same trench are not truly redundant.

- Structured Cabling: Avoid “spaghetti” wiring. High-density MTP/MPO connectors and proper datacenter cable management are essential for scaling these complex environments.
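The point about shared trenches can be made with basic availability arithmetic. The sketch below compares two independent paths against two paths that share a common segment. The availability figures are hypothetical and the model assumes failures are otherwise independent, which real networks only approximate.

```python
def parallel_availability(path_availabilities):
    """Availability of redundant paths that fail independently of each other."""
    all_down = 1.0
    for availability in path_availabilities:
        all_down *= (1.0 - availability)
    return 1.0 - all_down

def with_shared_segment(path_availabilities, shared_segment_availability):
    """Same paths, but both depend on one shared segment (e.g., a common trench)."""
    return shared_segment_availability * parallel_availability(path_availabilities)

# Hypothetical figures: each path is 99.9% available, the shared trench 99.95%.
diverse = parallel_availability([0.999, 0.999])
same_trench = with_shared_segment([0.999, 0.999], 0.9995)

print(f"Physically diverse paths: {diverse:.6f}")
print(f"Paths sharing a trench:   {same_trench:.6f}")
```

In this model, the shared trench caps the combined availability at the trench’s own figure, no matter how many fibers run through it.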

Frequently Asked Questions about Data Center Connectivity

What is the industry standard for data center uptime?

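The Uptime Institute’s Tier IV classification is commonly associated with 99.995% availability, which works out to roughly 26 minutes of downtime per year. Many providers target “five nines” (99.999%), which allows only about 5 minutes of downtime per year. Achieving either level requires redundant power, cooling, and highly resilient data center connectivity.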
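Converting an availability percentage into allowed downtime is simple arithmetic, sketched below for a 365-day year. The percentages in the loop are common reference points, not figures from any specific contract.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a non-leap year

def downtime_minutes_per_year(availability_percent: float) -> float:
    """Maximum downtime per year implied by an availability percentage."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability_percent must be between 0 and 100")
    return (1 - availability_percent / 100) * MINUTES_PER_YEAR

for percent in (99.9, 99.99, 99.995, 99.999):
    minutes = downtime_minutes_per_year(percent)
    print(f"{percent}% availability: {minutes:.2f} minutes of downtime per year")
```

Running the numbers this way makes it easier to compare an SLA’s headline percentage with the outage time your business can actually absorb.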

How does edge computing affect data center connectivity?

Edge computing moves processing closer to the user to reduce latency. This requires a proliferation of compact edge data centers connected back to a core “brain” via high-speed DCI links. Enterprises using edge sites can see latency reductions of 12 to 30 times compared to centralized hyperscale clouds.

What is the difference between intra-data center and inter-data center connectivity?

Intra-data center connectivity refers to the cabling and switches inside a single building (usually distances under 2 km). Inter-data center connectivity (DCI) refers to the links between different buildings or cities.

Conclusion

Building a robust data center connectivity strategy is no longer just an IT requirement—it’s a business imperative. Whether you are planning a data center relocation in Boston or a data center build out in Brockton, MA, the choices you make regarding fiber types, latency, and redundancy will define your company’s digital resilience for years to come.

At AccuTech Communications, we specialize in making these complex transitions simple. From initial data center infrastructure design to the final data center move checklist, we provide the certified expertise businesses in Massachusetts, New Hampshire, and Rhode Island need to stay connected.

Ready to optimize your infrastructure? Contact our team today to discuss your specific data center connectivity needs and ensure your business is ready for the high-speed demands of 2025 and beyond.